Toast → GHL Pipeline — Step-by-Step Diagnostic

Pipeline: auth → location → Download → CSV → check → clean names → dedup → upsert → workflow → ledger → notify

The session cookies (auth0, TOAST_SESSION, etc.) are loaded from toast_session.json and refreshed on each run. session.ensure_session() handles re-login if cookies expire.
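Below is a minimal sketch of an expiry check that ensure_session() could run before deciding to re-login. It assumes toast_session.json holds a Playwright-style storage state: a `cookies` list whose entries carry `name` and an `expires` Unix timestamp, with -1 for session cookies. The helper names, the required-cookie set, and the five-minute safety margin are hypothetical.

```python
import json
import time
from typing import Iterable, Optional

# Hypothetical: cookie names treated as mandatory for an authenticated Toast session.
REQUIRED_COOKIES = {"auth0", "TOAST_SESSION"}


def session_needs_login(
    storage_state: dict,
    required: Iterable[str] = REQUIRED_COOKIES,
    now: Optional[float] = None,
    skew_seconds: int = 300,
) -> bool:
    """Return True if any required cookie is missing or expires within skew_seconds."""
    now = time.time() if now is None else now
    by_name = {c["name"]: c for c in storage_state.get("cookies", [])}
    for name in required:
        cookie = by_name.get(name)
        if cookie is None:
            return True  # never logged in, or the cookie was cleared
        expires = cookie.get("expires", -1)
        # Playwright records -1 for session cookies; treat those as still valid here.
        if expires != -1 and expires <= now + skew_seconds:
            return True
    return False


def load_storage_state(path: str = "toast_session.json") -> dict:
    """Read the saved storage state from disk."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)
```

The margin forces a fresh login a few minutes early, so a cookie does not lapse partway through a run.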

session.py · ensure_session(headless=True)

What it's supposed to do: click the top-left location switcher in Toast UI, type the target restaurant name, and select it. The page should then show that restaurant's guestbook.

What actually happens: the dropdown visibly opens, the right item is selected, and the page header updates to show the new location. But Toast's server-side "active restaurant" doesn't actually change, so the next export reads the OLD context.

The cyclic shift in today's seed runs (requested location → data actually received):

- BP → GG's data

- GG → IC's data

- IC → Pasadena's data

- Pasadena → BP's data

Each location reliably gets the NEXT location's data. Wrapping bug — confirmed via direct API calls too.
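The shift appears when each export's row count is compared against all four locations' baselines, not just the requested one. The sketch below makes that comparison using the baselines and seed-run counts from the row-count check in this report. `closest_location` is a hypothetical helper. "Nearest baseline wins" is an assumed rule, needed because the ±30% bands for GG and Pasadena overlap.

```python
# Expected guestbook row counts per location (baselines from the row-count gate).
BASELINES = {"BP": 4476, "GG": 12661, "IC": 21682, "Pasadena": 13423}


def closest_location(row_count: int, baselines: dict = BASELINES) -> str:
    """Return the location whose baseline is nearest to row_count."""
    return min(baselines, key=lambda loc: abs(baselines[loc] - row_count))


# Row counts the seed runs actually received for each requested location.
observed = {"BP": 12668, "GG": 21683, "IC": 13425, "Pasadena": 4484}

for requested, count in observed.items():
    print(f"{requested}: got {count} rows -> looks like {closest_location(count)}")
```

Run as-is, it prints one line per location. Each line points at the next location in the BP → GG → IC → Pasadena → BP cycle.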

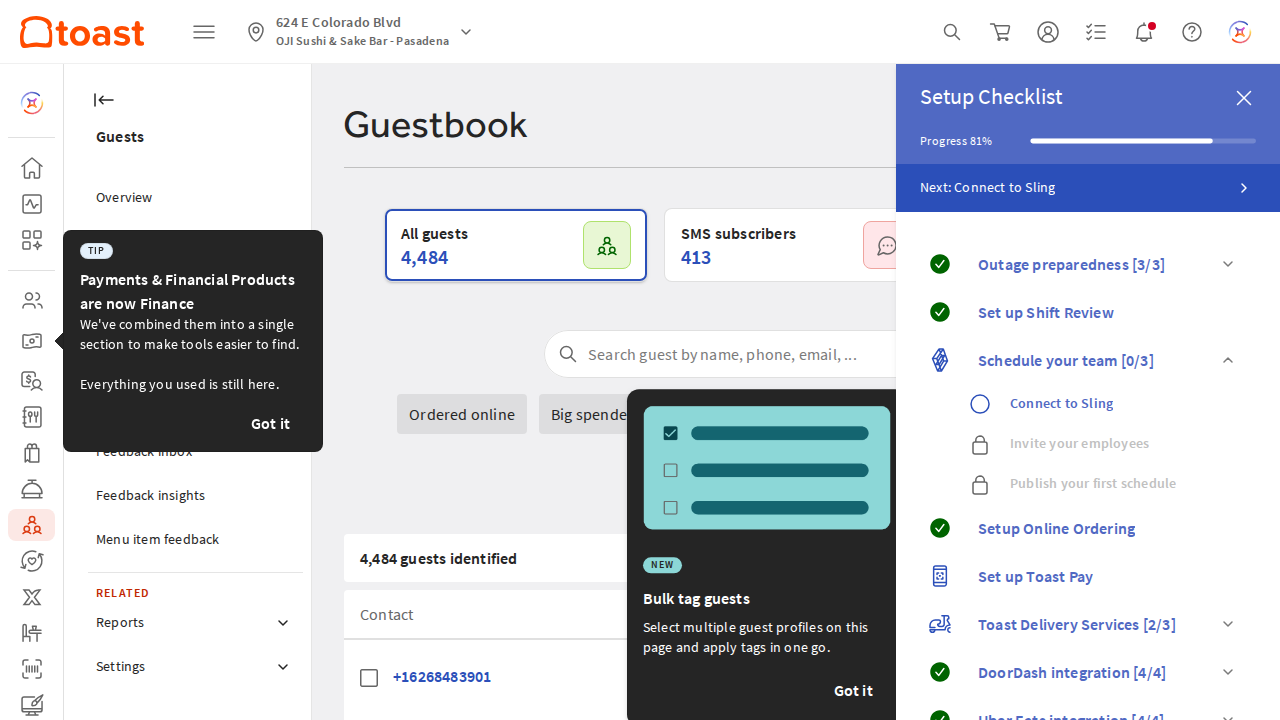

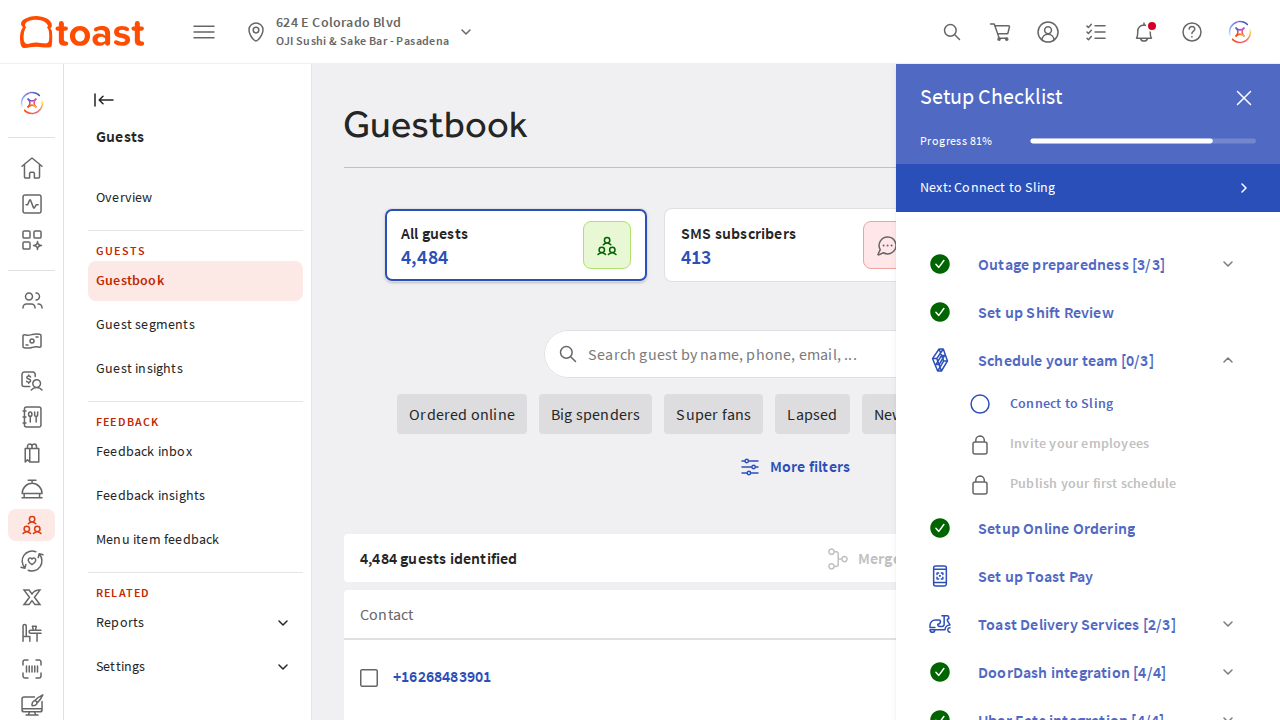

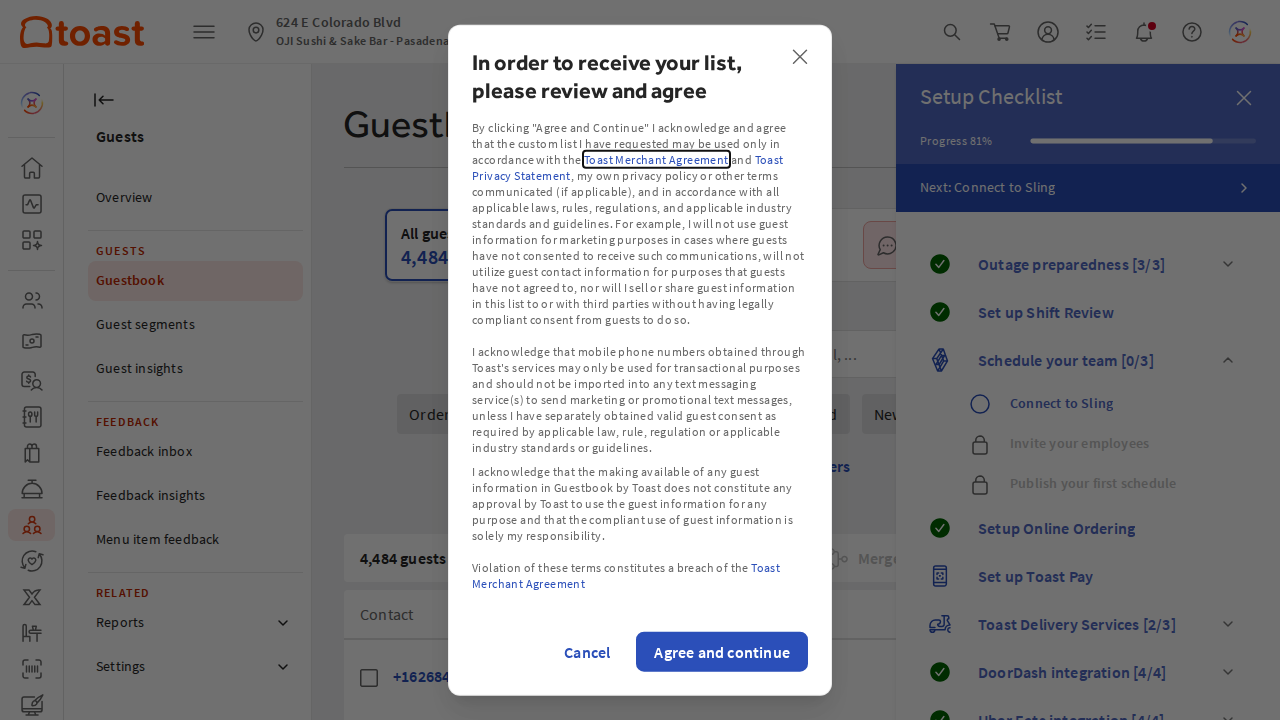

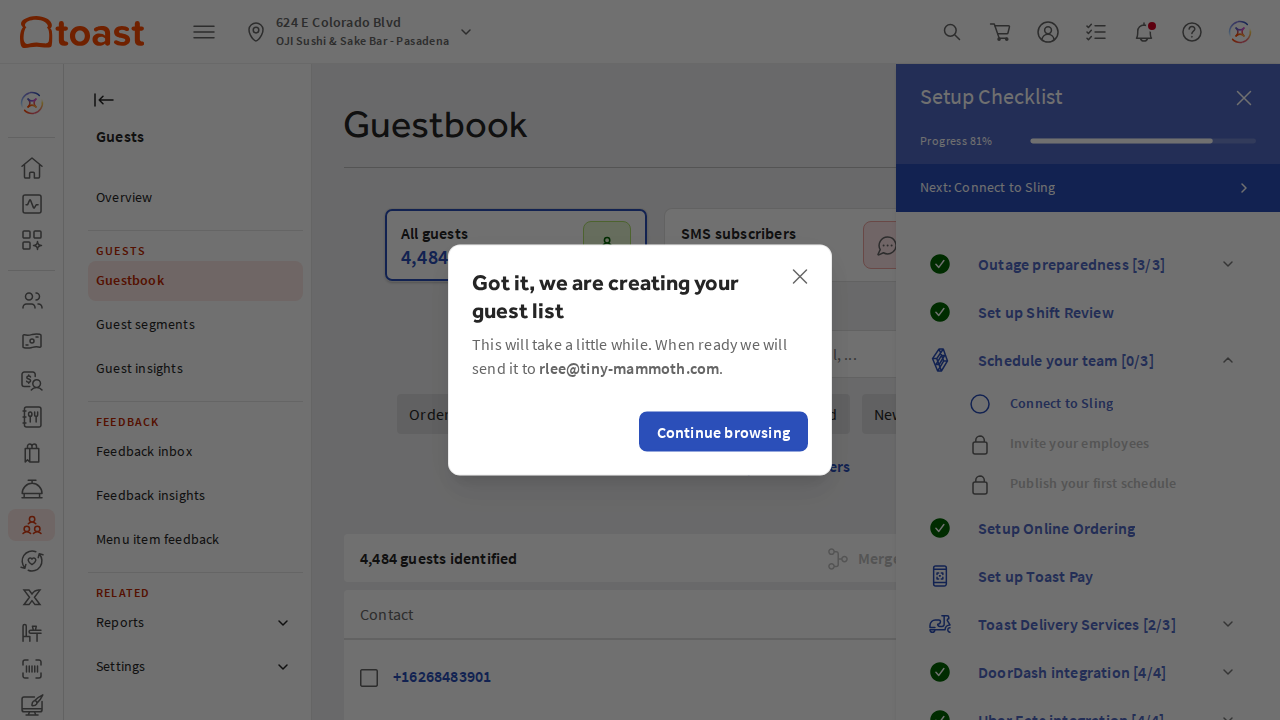

trigger_toast_export.py · switch_toast_location()restaurantGuidList and the toast-restaurant-external-id header, AND the API-returned reportUUIDs never appear in any actual email — the export just doesn't fully fire for our requests.Screenshots from the most recent diagnostic seed run (Pasadena iteration):

→ The UI looks fine at every step. The bug only reveals itself when the actual CSV file content is checked against the expected row count baseline. Click any image to enlarge.

The Download button click + "Agree and continue" modal handling work fine. Toast accepts the click, queues an export, and emails the link within ~30-90s.

This step is downstream of Step 2 — if Step 2 silently set the wrong location, this faithfully exports that wrong location.

trigger_toast_export.py · button-finding evaluate + Agree modal handling

Polls rlee@tiny-mammoth.com via IMAP for emails with subject "Your Guestbook contacts are ready to be downloaded". Filters by:

- triggered_after (timestamp): skips emails that predate this trigger

- exclude_uuids: skips UUIDs already consumed in this run

Hardened today against IMAP connection drops + bad Date headers.
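Below is a sketch of those two filters plus the Date-header hardening, written against the standard library's `email` package. Several details are assumptions, not a description of fetch_toast_link.py: the `Candidate` shape, the `downloadReportUUID=` regex for pulling the UUID out of the body, and the choice to skip an unparseable Date header rather than crash on it.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Iterable, List, Optional, Set

SUBJECT = "Your Guestbook contacts are ready to be downloaded"
# Assumed: the email body contains a link carrying ?downloadReportUUID=<uuid>.
UUID_RE = re.compile(r"downloadReportUUID=([0-9a-fA-F-]{36})")


@dataclass
class Candidate:
    subject: str
    date_header: Optional[str]
    body: str


def parse_date(header: Optional[str]) -> Optional[datetime]:
    """Parse an RFC 2822 Date header; return None instead of raising on bad input."""
    if not header:
        return None
    try:
        dt = parsedate_to_datetime(header)
    except (TypeError, ValueError, IndexError):  # exception type varies by Python version
        return None
    # A naive result means the header carried no usable zone; assume UTC.
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def pick_report_uuids(
    messages: Iterable[Candidate],
    triggered_after: datetime,
    exclude_uuids: Set[str],
) -> List[str]:
    """Return UUIDs from matching emails sent after the trigger and not yet consumed."""
    found: List[str] = []
    for msg in messages:
        if msg.subject.strip() != SUBJECT:
            continue
        sent = parse_date(msg.date_header)
        if sent is None or sent < triggered_after:
            continue  # undated, or predates this trigger
        match = UUID_RE.search(msg.body)
        if match and match.group(1) not in exclude_uuids and match.group(1) not in found:
            found.append(match.group(1))
    return found
```

`triggered_after` must be timezone-aware, because comparing it with the aware datetimes returned by `parse_date` would otherwise raise.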

fetch_toast_link.py

Navigates to ?downloadReportUUID=<uuid> in Playwright with accept_downloads=True. Saves the ZIP and extracts the CSV.

Mechanically reliable — but downstream of the same wrong-location bug. The downloaded file is whatever Toast generated.
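The extract half of this step reduces to a few `zipfile` calls. The sketch below pulls the single CSV out of the downloaded archive. The function name and the "exactly one .csv member" expectation are assumptions.

```python
import io
import zipfile
from pathlib import Path


def extract_single_csv(zip_bytes: bytes, dest_dir: Path) -> Path:
    """Write the only .csv member of a ZIP archive to dest_dir and return its path."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as zf:
        csv_names = [n for n in zf.namelist() if n.lower().endswith(".csv")]
        if len(csv_names) != 1:
            raise ValueError(f"expected exactly one CSV in export, found {csv_names}")
        # Keep only the base name so a member path like "../x.csv" cannot escape dest_dir.
        target = Path(dest_dir) / Path(csv_names[0]).name
        target.write_bytes(zf.read(csv_names[0]))
        return target
```

Failing loudly on zero or several CSVs keeps a malformed export from reaching the row-count gate.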

download_toast_csv.py

Compares the downloaded CSV's row count to the expected baseline per location, with a ±30% tolerance band. This is what's protecting your GHL accounts from getting wrong-location data uploaded.

| Location | Expected rows | Tolerance band (±30%) | Rows in today's seed | Result |
|---|---|---|---|---|
| Kaju Buena Park | 4,476 | 3,133 – 5,818 | 12,668 | aborted ✓ |
| Kaju Garden Grove | 12,661 | 8,862 – 16,459 | 21,683 | aborted ✓ |
| Kaju Irvine Culver | 21,682 | 15,177 – 28,186 | 13,425 | aborted ✓ |
| Oji Sushi Pasadena | 13,423 | 9,396 – 17,449 | 4,484 | aborted ✓ |

All 4 mis-routings caught. Zero bad data reached GHL from the seed runs.
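The band arithmetic behind the table can be sketched as follows. The bounds are 70% and 130% of the baseline, rounded down to whole rows. That truncation is inferred from the table's numbers rather than read from assert_row_count_matches(), and the names below are hypothetical.

```python
class RowCountMismatch(Exception):
    """Raised when an export's size falls outside the location's tolerance band."""


def tolerance_band(expected: int, tolerance_pct: int = 30) -> tuple:
    """Return (low, high) bounds, truncated to whole rows using integer math."""
    low = expected * (100 - tolerance_pct) // 100
    high = expected * (100 + tolerance_pct) // 100
    return low, high


def check_row_count(location: str, expected: int, actual: int, tolerance_pct: int = 30) -> None:
    """Abort the location before any GHL call if the CSV size looks wrong."""
    low, high = tolerance_band(expected, tolerance_pct)
    if not low <= actual <= high:
        raise RowCountMismatch(
            f"{location}: got {actual} rows, expected {expected} (allowed {low}-{high})"
        )


# Today's BP seed: 12,668 rows against a 4,476 baseline, so this would raise.
```

Integer math avoids floating-point edge cases at the band edges.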

process_and_upload.py · assert_row_count_matches()

Parses the Toast CSV. Filters by lastVisitDate against the location's checkpoint. Dedupes within-batch by phone (Toast can repeat the same phone across rows). Cleans junk names from card swipes.
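Below is a sketch of the checkpoint filter and the within-batch phone dedupe, built on the `csv` module. Several details are assumptions about Toast's export format, not confirmed: columns named `lastVisitDate` (ISO `YYYY-MM-DD` prefix) and `phone`, a strictly-after comparison with the checkpoint, a 10-digit US phone normalization, and the rule that the first row seen for a phone wins.

```python
import csv
import re
from typing import Dict, Iterable, List, Optional


def normalize_phone(raw: str) -> Optional[str]:
    """Reduce a phone string to 10 US digits, or None if it can't be one (assumed rule)."""
    digits = re.sub(r"\D", "", raw or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits if len(digits) == 10 else None


def filter_and_dedupe(rows: Iterable[Dict[str, str]], checkpoint: str) -> List[Dict[str, str]]:
    """Keep rows visited after checkpoint, one row per normalized phone (first seen wins)."""
    seen = set()
    kept = []
    for row in rows:
        # ISO dates compare correctly as strings; strict '>' is an assumption.
        if (row.get("lastVisitDate") or "")[:10] <= checkpoint:
            continue
        phone = normalize_phone(row.get("phone", ""))
        if phone is None or phone in seen:
            continue
        seen.add(phone)
        kept.append({**row, "phone": phone})
    return kept


def load_rows(path: str) -> List[Dict[str, str]]:
    """Read the exported CSV into a list of column-name -> value dicts."""
    with open(path, newline="", encoding="utf-8") as fh:
        return list(csv.DictReader(fh))
```

Normalizing before deduping is what lets "(555) 123-4567" and "1-555-123-4567" collapse into one contact.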

Junk-name examples that now fall back to "Kajufam" / "Ojifam":

- 'Visa | Cardholder' → 'Kajufam'  # 405 rows in BP CSV

- 'Valued Customer | ' → 'Kajufam'  # 57 rows

- 'Discover | Cardmember' → 'Kajufam'  # 11 rows

- '10m??' → 'Kajufam'

- 'Mike' → 'Mike'  # real names preserved

- 'Sarah | Cardholder' → 'Sarah'  # first name still real
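The sketch below reproduces the examples above. The junk-token list, the letters-only check, and the signature are illustrative assumptions, not the actual clean_first_name(). Only the first-name field is examined, which is why 'Sarah | Cardholder' keeps 'Sarah'. The fallback is passed in per brand: 'Kajufam' for the Kaju locations and 'Ojifam' for Oji Sushi Pasadena.

```python
import re

# Hypothetical junk values seen in card-swipe first-name fields (lower-cased).
JUNK_FIRST_NAMES = {
    "visa", "mastercard", "discover", "amex", "american express",
    "valued customer", "cardholder", "cardmember",
}
# A "real" first name here: starts with a letter; then letters, spaces, apostrophes, hyphens.
NAME_RE = re.compile(r"[A-Za-z][A-Za-z' -]*")


def clean_first_name(first: str, fallback: str) -> str:
    """Return the first name if it looks real, else the brand fallback (e.g. 'Kajufam')."""
    name = (first or "").strip()
    if not name or name.lower() in JUNK_FIRST_NAMES or not NAME_RE.fullmatch(name):
        return fallback
    return name
```

Because the pattern is ASCII-only, this sketch would also send accented names such as 'José' to the fallback. A production version would need a broader letter class.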

process_and_upload.py · load_and_clean_csv() + clean_first_name()

Each candidate phone gets bucketed against the per-location ledger (uploaded_phones.json):

- NEW — phone not in ledger → enroll

- RE-ENTRY — in ledger, last_enrolled ≥ 180 days ago → enroll, increment count

- COOLDOWN — in ledger, last_enrolled < 180 days ago → skip

Verified end-to-end against the local BP CSV: 130 rows with phone → 113 unique phones → first run all NEW → second run all 113 cooldown-skipped.
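The three buckets can be sketched as below. The ledger entry shape (`last_enrolled` as an ISO date plus a `count`) is an assumption modeled on the field names above. The function names only echo bucket_contacts() and state.record_enrollment(); they are not the real signatures.

```python
from datetime import date
from typing import Dict, List

COOLDOWN_DAYS = 180


def bucket_contacts(phones: List[str], ledger: Dict[str, dict], today: date) -> Dict[str, List[str]]:
    """Split phones into NEW / RE-ENTRY / COOLDOWN against the per-location ledger."""
    buckets: Dict[str, List[str]] = {"new": [], "reentry": [], "cooldown": []}
    for phone in phones:
        entry = ledger.get(phone)
        if entry is None:
            buckets["new"].append(phone)
            continue
        days_since = (today - date.fromisoformat(entry["last_enrolled"])).days
        # Exactly 180 days counts as re-entry; anything shorter is still cooling down.
        buckets["reentry" if days_since >= COOLDOWN_DAYS else "cooldown"].append(phone)
    return buckets


def record_enrollment(ledger: Dict[str, dict], phone: str, today: date) -> None:
    """Stamp the enrollment date and bump the lifetime count."""
    entry = ledger.setdefault(phone, {"count": 0})
    entry["last_enrolled"] = today.isoformat()
    entry["count"] += 1
```

Recording every NEW phone and then bucketing the same list again moves all of them to cooldown. This mirrors the second-run check above.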

process_and_upload.py · bucket_contacts() · state.record_enrollment()

POST https://services.leadconnectorhq.com/contacts/upsert with each location's API key + locationId. Creates new contacts or updates existing ones (matched by phone). Returns the GHL contactId.
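Below is a standard-library sketch of how that request could be assembled. Several details are assumptions based on the public LeadConnector v2 API docs and should be checked against GHL's current reference: the `Authorization: Bearer` and `Version: 2021-07-28` headers, the JSON body fields, and reading the id from `contact.id` in the response. The helper names are hypothetical. The request is built but not sent, so the example runs offline.

```python
import json
import urllib.request

UPSERT_URL = "https://services.leadconnectorhq.com/contacts/upsert"
API_VERSION = "2021-07-28"  # assumed LeadConnector v2 version header


def build_upsert_request(api_key: str, location_id: str, phone: str,
                         first_name: str) -> urllib.request.Request:
    """Prepare (but don't send) the upsert call for one contact."""
    body = {"locationId": location_id, "phone": phone, "firstName": first_name}
    return urllib.request.Request(
        UPSERT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Version": API_VERSION,
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        method="POST",
    )


def contact_id_from_response(payload: bytes) -> str:
    """Pull the contact id out of an upsert response body (shape assumed)."""
    return json.loads(payload)["contact"]["id"]


# Sending would look like: urllib.request.urlopen(req, timeout=30).read()
```

Keeping request construction separate from sending makes the per-location key and locationId pairing easy to unit-test without touching GHL.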

process_and_upload.py · upsert_contact()

POST /contacts/{contactId}/workflow/{workflow_id}: enrolls each contact in their location's "2b Meals Momentum" workflow. The workflow then fires the SMS sequence (Day 1, Day 3, Day 7, etc.).

This is the step currently firing duplicates. Today we triggered ~2,036 enrollments across 3 runs (midnight, 10:27 AM, 11:47 AM); ~1,434 of those are duplicates of contacts already enrolled earlier in the day. Each duplicate enrollment runs the WHOLE sequence again.
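Below is a sketch of the enrollment call wrapped in a per-run guard that refuses to enroll the same contactId twice within one process. The guard, the injected `send` callable, the empty JSON body, and the headers (same assumptions as the upsert sketch) are all hypothetical illustrations. A guard like this only covers a single process: protection across separate runs still has to come from the persisted ledger.

```python
import urllib.parse
import urllib.request
from typing import Callable, Set

BASE_URL = "https://services.leadconnectorhq.com"
API_VERSION = "2021-07-28"  # assumed LeadConnector v2 version header


def build_enroll_request(api_key: str, contact_id: str, workflow_id: str) -> urllib.request.Request:
    """Prepare POST /contacts/{contactId}/workflow/{workflow_id} without sending it."""
    path = "/contacts/{}/workflow/{}".format(
        urllib.parse.quote(contact_id, safe=""),
        urllib.parse.quote(workflow_id, safe=""),
    )
    return urllib.request.Request(
        BASE_URL + path,
        data=b"{}",  # assumed: an empty JSON body is acceptable
        headers={
            "Authorization": f"Bearer {api_key}",
            "Version": API_VERSION,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def enroll_once(contact_id: str, workflow_id: str, api_key: str,
                enrolled_this_run: Set[str],
                send: Callable[[urllib.request.Request], object]) -> bool:
    """Enroll contact_id unless this process already did; return True if a request was sent."""
    if contact_id in enrolled_this_run:
        return False  # a second enrollment would restart the whole SMS sequence
    send(build_enroll_request(api_key, contact_id, workflow_id))
    enrolled_this_run.add(contact_id)  # recorded only after send() returns without raising
    return True
```

In production, `send` could be `lambda req: urllib.request.urlopen(req, timeout=30)`. `urlopen` raises `HTTPError` on 4xx/5xx responses, so a failed call is never recorded as enrolled.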

process_and_upload.py · add_to_workflow()

After each location: writes the max lastVisitDate to run_state.json (date checkpoint) and adds enrolled phones to uploaded_phones.json (ledger). The CI workflow commits both files back to master with [skip ci] after each run.
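Below is a sketch of the checkpoint write, assuming run_state.json maps each location to an ISO date string. The ledger file could be written with the same helper. Writing to a temp file and swapping it in with `os.replace` is a suggested hardening so that a crash mid-write cannot leave half a JSON file for the CI commit. The file shape and helper names are hypothetical.

```python
import json
import os
import tempfile
from typing import Dict, Iterable


def write_json_atomic(path: str, data: dict) -> None:
    """Write JSON to a sibling temp file, then atomically replace the target."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as fh:
            json.dump(data, fh, indent=2, sort_keys=True)
        os.replace(tmp, path)
    except BaseException:
        os.unlink(tmp)  # don't leave stray temp files behind on failure
        raise


def save_location_results(state_path: str, location: str,
                          visit_dates: Iterable[str], state: Dict[str, str]) -> None:
    """Advance the location's checkpoint to the max lastVisitDate seen (ISO strings)."""
    dates = list(visit_dates)
    if dates:
        state[location] = max([state.get(location, "")] + dates)
    write_json_atomic(state_path, state)
```

Taking `max` together with the stored value means a rerun over older dates cannot move the checkpoint backwards.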

state.py · save_state() · save_ledger()

Posts a per-location summary to #system-notifications via the TM Notify worker. The format includes total guest rows, % with phone, % with email, new vs. re-entry counts, and the cooldown-skipped count.
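Below is a sketch of the summary text. The field set mirrors the format described above, but the wording, layout, and function name are assumptions. Posting to the TM Notify worker is left out because its interface isn't described here.

```python
def format_summary(location: str, total_rows: int, with_phone: int, with_email: int,
                   new: int, reentry: int, cooldown_skipped: int) -> str:
    """Build the per-location summary text for #system-notifications."""
    def pct(part: int) -> str:
        # Whole-number percentage of total guest rows; guard against an empty export.
        return f"{100 * part / total_rows:.0f}%" if total_rows else "n/a"

    return "\n".join([
        f"{location}: {total_rows:,} guest rows",
        f"  with phone: {with_phone:,} ({pct(with_phone)})",
        f"  with email: {with_email:,} ({pct(with_email)})",
        f"  enrolled: {new:,} new, {reentry:,} re-entry",
        f"  cooldown-skipped: {cooldown_skipped:,}",
    ])
```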

process_and_upload.py · notify()

Today's runs (enrollments per location):

| Run | Time (PT) | BP | GG | IC | Pasadena | Notes |
|---|---|---|---|---|---|---|
| Local midnight | 00:00–00:19 | — | 274 ✗ | 229 ✗ | 229+241 ✗ | Pasadena ran twice; BP missed |
| GH Action morning | 10:27 | 131 ✗ | 147 ✗ | 185 ✗ | 141 ✗ | "Successful" but dupes of earlier batches |
| GH Action 11:47 | 11:47 | ~130 ✗ | ~145 ✗ | ~184 ✗ | (failed) | Triggered all again before crashing on Pasadena |
| Seed run #1 | 12:50 | aborted | aborted | aborted | aborted | Row count gate caught all 4 mis-routings |
| Seed run #2 | 13:08 | aborted | aborted | aborted | aborted | Same; direct dropdown click didn't fix it |
| Seed run #3 | 13:34 | aborted | aborted | aborted | aborted | 15s wait + diagnostic; page stuck on BP |
| Seed run #4 | 14:52 | aborted | aborted | aborted | aborted | Playwright native click; same cyclic shift |

Estimated state of GHL right now:

| Location | Total enrollments today | Likely dupes | Workflow steps still firing? |
|---|---|---|---|
| BP | ~261 | ~130 | YES |
| GG | ~566 | ~420 | YES |
| IC | ~598 | ~414 | YES |
| Pasadena | ~611 | ~470 | YES |
| Total | ~2,036 | ~1,434 | |

The cleanup script cleanup_today_enrollments.py exists locally on a feature branch but was never merged or executed. It needs the filter narrowed (dateAdded instead of dateUpdated) before running.
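Below is a sketch of the narrowed selection: it keeps contacts whose `dateAdded` falls on a given Pacific calendar date and ignores `dateUpdated` entirely. It assumes contact records carry ISO-8601 `dateAdded` timestamps, with naive values treated as UTC. The helper name and the fixed UTC-7 offset (PDT) are also assumptions; `zoneinfo.ZoneInfo("America/Los_Angeles")` would be sturdier wherever tz data is installed.

```python
from datetime import date, datetime, timedelta, timezone
from typing import Iterable, List

PDT = timezone(timedelta(hours=-7))  # assumed: cleanup runs while Pacific Daylight Time applies


def added_on(contacts: Iterable[dict], day: date, tz: timezone = PDT) -> List[dict]:
    """Select contacts whose dateAdded falls on `day` in the given zone (dateUpdated ignored)."""
    picked = []
    for contact in contacts:
        raw = contact.get("dateAdded")
        if not raw:
            continue
        added = datetime.fromisoformat(raw.replace("Z", "+00:00"))
        if added.tzinfo is None:
            added = added.replace(tzinfo=timezone.utc)  # assume naive timestamps are UTC
        if added.astimezone(tz).date() == day:
            picked.append(contact)
    return picked
```

Converting to Pacific time before comparing dates matters here. A contact added at 06:00 UTC on the 26th was added on the evening of the 25th in PT.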

The verdict — where to focus

The misfiring step is #2 (Switch Toast Location). Everything downstream works mechanically; everything upstream works fully. The bug is server-side at Toast and not solvable from the client today.

Two unblocking moves, plus one long-term fix:

1. Stop the bleeding — narrow + run cleanup_today_enrollments.py to remove the ~1,434 dupe workflow enrollments still firing SMS.

2. Today's 4/10–4/25 rerun: manually export the 4 CSVs from the Toast UI (one click each), and I process them deterministically. This bypasses step 2 entirely for tonight.

3. Long-term — file a Toast support ticket asking for the proper API to specify location for export. The dropdown's behavior is a Toast UX choice we can't override.